A system for classifying affect from gesture using Laban Movement Analysis.

With new hardware available to incorporate overlooked modalities into interactions with computers, the fields of User Experience design and Human-Computer Interaction are witnessing a resurgence of interest in gestural interfaces. Technologies such as Kinect, Leap Motion, and HoloLens are making it possible to reliably capture movement data from users. Simultaneously, popular concern about the disembodied experience of using computers is driving the search for media, including Virtual and Augmented Reality, that incorporate more of the expressive human body. The quest to create inspiring interactions with technology requires exploring different modalities of communication in order to identify user intention, communicate insights and options, and elicit affect.

Most existing gestural interfaces attempt to categorize and interpret movements based on their linguistic value, relying primarily on form-based mechanical characteristics of a movement for classification. It is clear, however, that a mechanically identical movement (one that follows approximately the same trajectory), such as a hand wave at eye level, can be performed with different qualities of movement to communicate different emotional intentions.

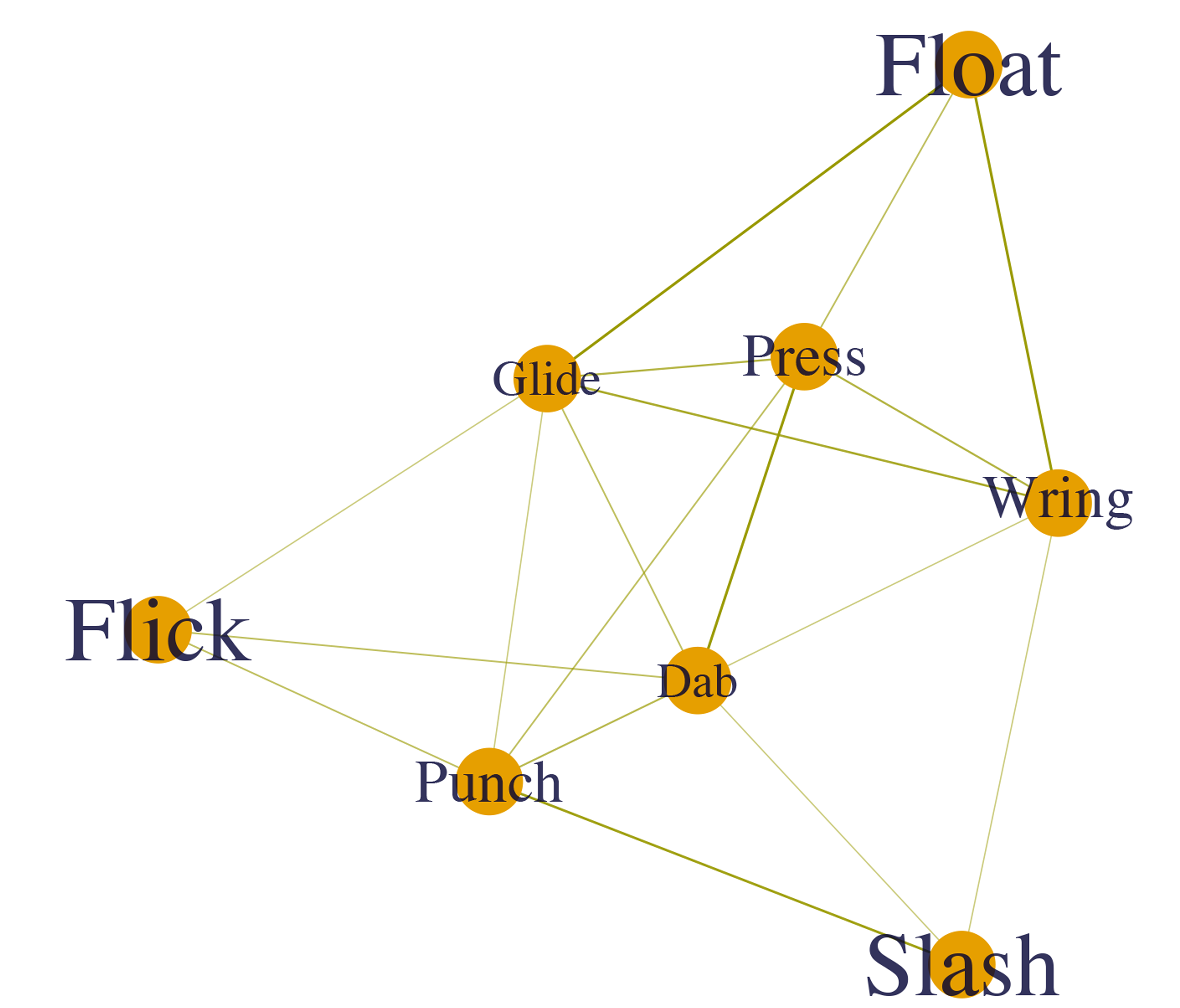

Leading movement analyst Rudolf von Laban created a set of eight expressive qualities, called the Laban Efforts, to classify the emotional intention of a movement: Punch, Slash, Dab, Wring, Press, Flick, Float, and Glide. Each Effort is a unique combination of values for how the movement occurs in time and space with a particular weight. Many researchers have tried, unsuccessfully, to use these qualities, along with other properties of movement in Laban Movement Analysis, as guiding principles for classifying affect from body movement. In this project, Alan Ni and I attempted to shed light on where this research may be going wrong. Are the features being used in the models irrelevant to Laban Effort classification? Is the data of low quality? Or is the relationship between the Effort system and expressions of affect insignificant?
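To make the idea of a "unique combination" concrete, the sketch below encodes each Effort as a triple of factor values. The mapping follows the standard Laban Effort action table (space: direct or indirect, weight: strong or light, time: sudden or sustained). The names `Effort`, `EFFORTS`, and `effort_from_factors` are illustrative assumptions, not code from the project.

```python
from typing import NamedTuple


class Effort(NamedTuple):
    space: str   # "direct" or "indirect"
    weight: str  # "strong" or "light"
    time: str    # "sudden" or "sustained"


# Standard Laban Effort action table: 2 x 2 x 2 = 8 combinations.
EFFORTS = {
    "Punch": Effort("direct", "strong", "sudden"),
    "Slash": Effort("indirect", "strong", "sudden"),
    "Dab":   Effort("direct", "light", "sudden"),
    "Flick": Effort("indirect", "light", "sudden"),
    "Press": Effort("direct", "strong", "sustained"),
    "Wring": Effort("indirect", "strong", "sustained"),
    "Glide": Effort("direct", "light", "sustained"),
    "Float": Effort("indirect", "light", "sustained"),
}


def effort_from_factors(space: str, weight: str, time: str) -> str:
    """Return the Effort name for a given (space, weight, time) triple."""
    target = Effort(space, weight, time)
    for name, factors in EFFORTS.items():
        if factors == target:
            return name
    raise ValueError(f"No Effort matches {target}")


print(effort_from_factors("direct", "strong", "sudden"))
print(effort_from_factors("indirect", "light", "sustained"))
```

Treating each factor as binary is a simplification; in practice the three factors vary continuously, which is part of what makes labeling and classification difficult.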

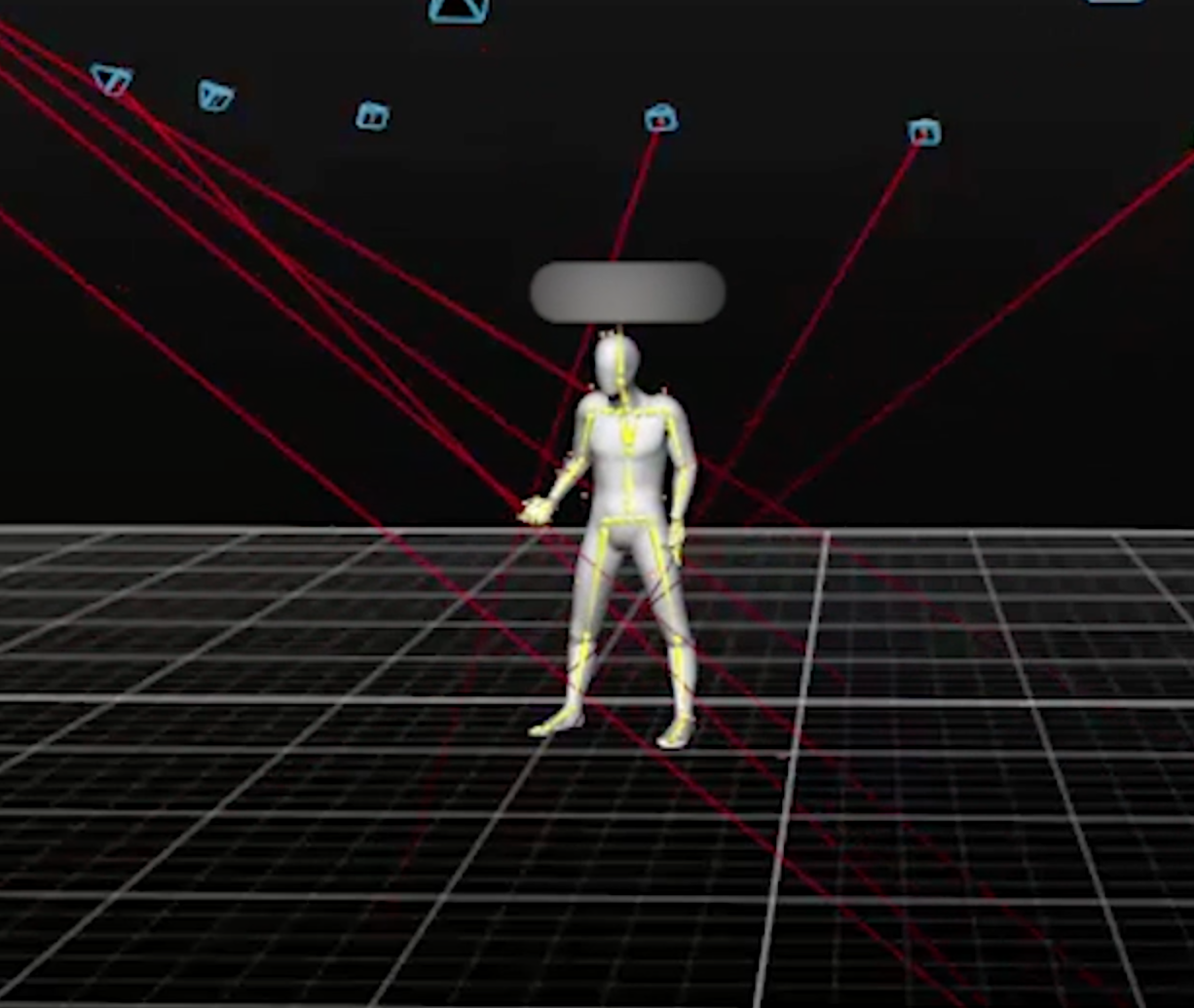

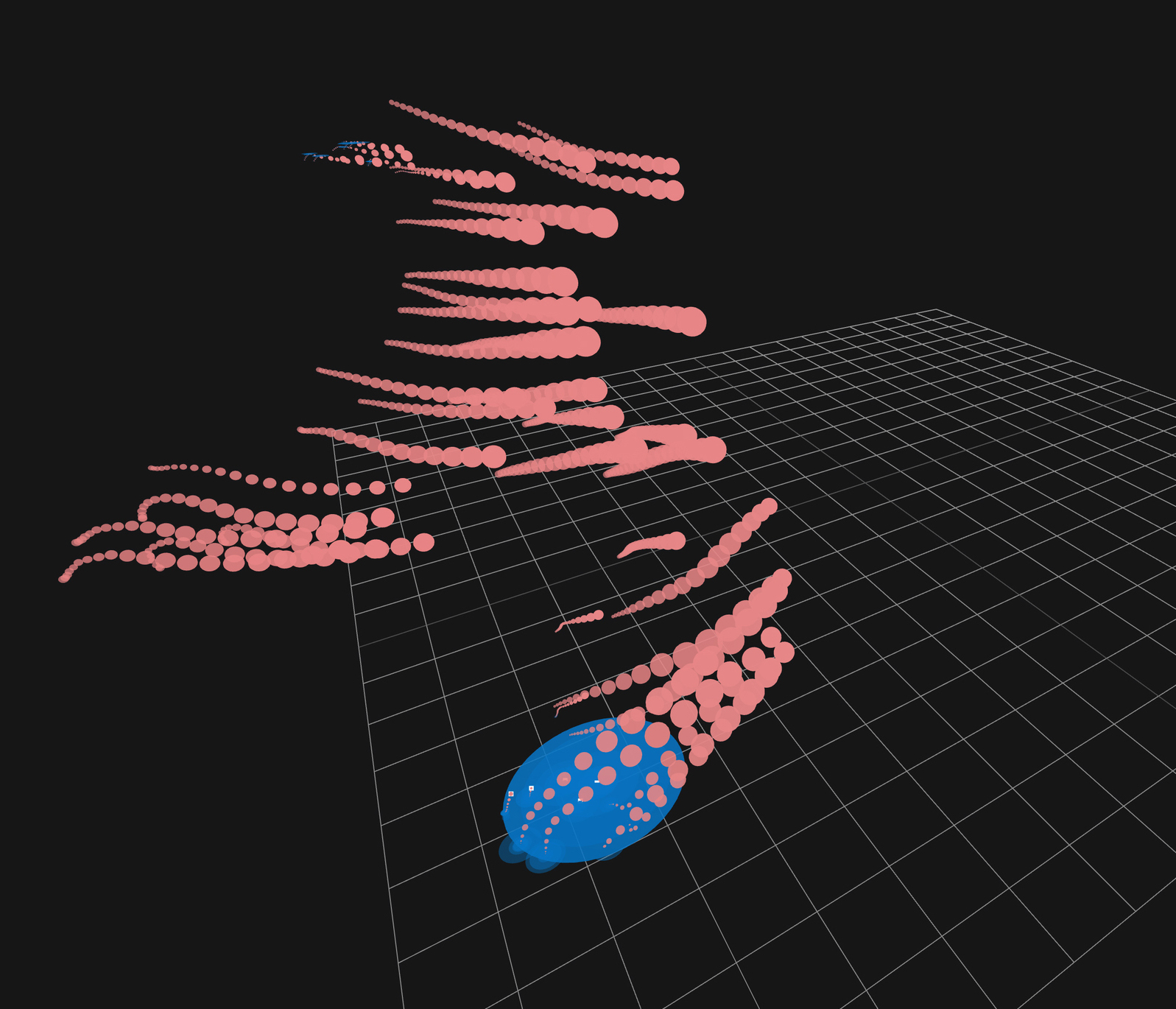

Informed by the attempts of researchers before us, we collected movement data from individuals in various elicited states of affect (in the valence-arousal space). We algorithmically segmented the movements and manually classified their Laban Efforts using an application we developed to quickly label the movement data. We then devised various models, beginning with KNN and SVM, to classify Effort and affect, experimenting with different measured properties and computed macro-features. Details are available in the paper below.
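As a rough illustration of the KNN starting point, here is a minimal k-nearest-neighbours classifier over per-segment feature vectors. Everything specific in it is an assumption made for illustration: the two features (mean speed and mean jerk magnitude), the toy values, and the name `knn_predict` are hypothetical and are not the features, data, or code used in the project.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Predict a label by majority vote among the k nearest training points.

    train: list of (feature_vector, label) pairs
    query: feature vector with the same length as the training vectors
    """
    # Sort training points by Euclidean distance to the query, keep the k closest.
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Made-up toy segments for illustration only:
# (mean speed, mean jerk magnitude) -> manually assigned Effort label.
toy_segments = [
    ((0.9, 0.8), "Punch"),
    ((1.0, 0.9), "Punch"),
    ((0.8, 0.7), "Punch"),
    ((0.2, 0.1), "Float"),
    ((0.1, 0.2), "Float"),
    ((0.3, 0.1), "Float"),
]

print(knn_predict(toy_segments, (0.85, 0.75)))
print(knn_predict(toy_segments, (0.15, 0.15)))
```

The same interface works for affect labels in place of Effort labels; only the label column changes.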